Faceless Brands Are Invisible: The Founder-Led Social Strategy

Brand accounts are seeing a 30% reach drop. It’s time for a Founder-Led Social Strategy. Learn how to humanize your brand and skyrocket engagement.

As we progress through 2026, the “behind-the-scenes” of your website is just as important as the front-end. Search engines now use Large Language Models to parse code faster than ever, making structured data for AI 2026 a critical requirement for visibility. It is no longer enough to just have great text; you must provide a machine-readable “map” of your content. This guide explores how to use LLMs to automate the generation of Schema.org markup, ensuring your brand blogs and products are instantly indexed and featured in rich snippets and AI-driven answer boxes.

Index your existing blog posts and product pages so the LLM can draw on them when it answers. Provide a system prompt like: “You are an expert assistant for [Brand]. Only answer questions using the provided documentation to ensure 100% accuracy and brand alignment.”

Goal:

Make your bot a controlled brand‑specific assistant, not a free‑form web‑searcher.

Approach:

Feed your LLM all existing blog posts, product‑page copy, and FAQs (via a RAG‑style index or a “knowledge base” in your chat platform).

System prompt template:

“You are an expert assistant for [Brand]. Only answer questions using the provided documentation to ensure 100% accuracy and strict brand alignment. If the documentation does not contain an answer, respond that the information is not available and suggest a related FAQ or support channel.”

Why it matters:

This helps the bot's answers meet E‑E‑A‑T and YMYL expectations by grounding them in your own audited content.
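The grounding approach above can be sketched in a few lines. This is a minimal illustration, not a production retriever: it assumes a naive keyword-overlap search instead of a real vector index, and the names `retrieve`, `build_messages`, and the sample documents are hypothetical. The resulting message list would be passed to whichever chat model your platform uses.

```python
import re

# System prompt taken from the template above; {brand} is filled in at runtime.
SYSTEM_PROMPT = (
    "You are an expert assistant for {brand}. Only answer questions using the "
    "provided documentation to ensure 100% accuracy and strict brand alignment. "
    "If the documentation does not contain an answer, respond that the "
    "information is not available and suggest a related FAQ or support channel."
)


def tokenize(text: str) -> set[str]:
    """Lowercase the text and split it into a set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question: str, docs: dict[str, str], top_k: int = 2) -> list[str]:
    """Return titles of the documents sharing the most words with the question.

    Assumption: simple word overlap stands in for a real RAG index.
    """
    q_words = tokenize(question)
    scored = [(len(q_words & tokenize(body)), title) for title, body in docs.items()]
    scored = [(score, title) for score, title in scored if score > 0]
    scored.sort(key=lambda pair: (-pair[0], pair[1]))
    return [title for _, title in scored[:top_k]]


def build_messages(brand: str, question: str, docs: dict[str, str]) -> list[dict[str, str]]:
    """Assemble chat messages that confine the model to the retrieved documents."""
    titles = retrieve(question, docs)
    context = "\n\n".join(f"[{t}]\n{docs[t]}" for t in titles)
    if not context:
        context = "(no matching documentation)"
    return [
        {"role": "system", "content": SYSTEM_PROMPT.format(brand=brand)},
        {"role": "user", "content": f"Documentation:\n{context}\n\nQuestion: {question}"},
    ]


# Made-up sample content, for illustration only.
SAMPLE_DOCS = {
    "Water resistance FAQ": "The watch is water resistant to 100 meters.",
    "Shipping": "We ship within 3 days.",
}
```

Calling `build_messages("Acme", "Is the watch water resistant?", SAMPLE_DOCS)` yields a system message plus a user message that contains only the matching FAQ text, so the model has nothing else to answer from.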

Don’t just hide the bot in a corner. Implement “Contextual Triggers” where the LLM-powered bot pops up with a specific question related to the section the user is reading. For example: “Would you like to see how the Zenshin NK5020-58P compares to other titanium watches?”

Goal:

Make the bot feel part of the page, not a generic pop‑up.

Implementation:

Use page‑level triggers:

On a product page (e.g., Zenshin NK5020‑58P titanium watch), show:

“Would you like to see how the Zenshin NK5020‑58P compares to other titanium watches?”

On a hearing‑aid blog, show:

“Want a quick comparison of these models for your daily lifestyle?”

Best practice:

Tie the bot’s trigger copy to the current entity (product name, condition, or campaign) so the LLM can follow up naturally with relevant internal links.
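One way to tie trigger copy to the current page is a small template lookup. The sketch below is an assumption about how this could be wired up: the `PageContext` fields and the `"product"` / `"blog"` page-type labels are hypothetical, and the blog template adds the entity name to the example question above.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class PageContext:
    page_type: str       # hypothetical labels: "product" or "blog"
    entity: str          # product name, condition, or campaign
    category: str = ""   # e.g. "titanium watches"; only used on product pages


# Trigger templates keyed by page type, based on the example questions above.
TEMPLATES = {
    "product": "Would you like to see how the {entity} compares to other {category}?",
    "blog": "Want a quick comparison of these {entity} models for your daily lifestyle?",
}


def trigger_copy(ctx: PageContext) -> Optional[str]:
    """Return the trigger question for this page, or None if no template applies."""
    template = TEMPLATES.get(ctx.page_type)
    if template is None:
        return None  # unknown page type: show no contextual trigger
    return template.format(entity=ctx.entity, category=ctx.category)


watch_page = PageContext("product", "Zenshin NK5020-58P", "titanium watches")
print(trigger_copy(watch_page))
```

Returning `None` for unknown page types keeps the bot quiet on pages where no entity-specific question makes sense.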

Step 3: Intent Capture for Personalization

Program the bot to identify user intent. Ask the LLM to categorize queries as “Learning,” “Comparing,” or “Buying.” This allows the bot to serve the exact next piece of content (as an internal link) that the user needs, which can reduce bounce rates.

Goal:

Turn chats into a live intent‑map that informs your information architecture (IA) and SEO.

Prompt the LLM to:

“Classify the following user message as one of: Learning, Comparing, Buying, or Support. Output only the intent label and the main entity requested (e.g., product name, condition, or page topic).”

Then route:

Learning → recommend blog posts, FAQ, or explainer pages.

Comparing → pull comparison tables or product‑cluster pages.

Buying → link directly to the product page, pricing, or booking form.

This routing can drop internal links into the chat, which can reduce bounce and, where those links are crawlable, signal that the linked pages are topically relevant.
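The classify-then-route step can be sketched as follows. Several details here are assumptions: the prompt above does not fix an output format, so this sketch assumes the model replies as `Label | entity`. The source also gives no route for Support, so the one below is a placeholder. Unrecognized labels fall back to Support so a human-facing channel is always offered.

```python
INTENTS = ("Learning", "Comparing", "Buying", "Support")

# Content types to suggest per intent, following the routing list above.
ROUTES = {
    "Learning": ["blog posts", "FAQ", "explainer pages"],
    "Comparing": ["comparison tables", "product-cluster pages"],
    "Buying": ["product page", "pricing", "booking form"],
    "Support": ["support channel"],  # assumption: not specified in the article
}


def parse_label(raw: str) -> tuple[str, str]:
    """Split an assumed 'Label | entity' reply into (intent, entity)."""
    label, _, entity = raw.partition("|")
    label = label.strip().capitalize()
    if label not in INTENTS:
        label = "Support"  # design choice: unknown output goes to support
    return label, entity.strip()


def route(raw: str) -> dict:
    """Map the model's reply to the content types the bot should link next."""
    intent, entity = parse_label(raw)
    return {"intent": intent, "entity": entity, "suggest": ROUTES[intent]}


print(route("Comparing | Zenshin NK5020-58P"))
```

Logging each `route` result alongside the page URL gives you the raw data for the intent map described in the goal.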

Use the LLM to generate “live” FAQs based on what users are actually asking your bot. If 50 people ask about “water resistance,” the LLM can flag this to you, allowing you to update your main SEO copy to reflect real-time search demand.

Goal:

Let user chats guide your SEO‑content roadmap.

Workflow:

Log the most frequent questions and intents (e.g., 50 people ask about “water resistance”).

Ask the LLM:

“Based on the logged user questions, what are the top 3 missing H2/H3 sections or FAQ questions that should be added to the main page about [topic]?”

Then:

Update the main SEO copy with those questions and answers, and re‑publish.

This keeps your content aligned with what users are asking in real time, a close proxy for search demand, which can improve both rankings and AI‑answer quality.
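The logging workflow above might look like this in practice. It is a rough sketch: it assumes each chat has already been reduced to a topic string (for example, the entity returned by the intent classifier), and the threshold of 50 simply mirrors the example in the text. `frequent_topics` and `gap_prompt` are hypothetical helper names.

```python
from collections import Counter


def frequent_topics(logged_topics: list[str], min_count: int = 50) -> list[tuple[str, int]]:
    """Return (topic, count) pairs asked at least min_count times, most frequent first."""
    counts = Counter(t.strip().lower() for t in logged_topics if t.strip())
    return [(topic, n) for topic, n in counts.most_common() if n >= min_count]


def gap_prompt(topic: str, questions: list[str]) -> str:
    """Build the content-gap prompt from the workflow above for one flagged topic."""
    listed = "\n".join(f"- {q}" for q in questions)
    return (
        "Based on the logged user questions, what are the top 3 missing H2/H3 "
        "sections or FAQ questions that should be added to the main page "
        f"about {topic}?\n\nLogged questions:\n{listed}"
    )


# Made-up log: 50 chats about water resistance, 3 about strap size.
log = ["Water resistance"] * 50 + ["strap size"] * 3
for topic, count in frequent_topics(log):
    print(f"{topic}: {count} chats")
```

Only topics that cross the threshold are flagged, so one-off questions do not trigger a rewrite of your main copy.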

Replace clunky forms with conversational data collection. Use the LLM to qualify leads through natural chat, then pass that structured data into your CRM. This improves user experience while providing your sales team with rich, semantic context.

Goal:

Turn conversations into structured, CRM‑ready leads.

Pattern:

Let the LLM qualify via chat:

“What are you mainly looking for—an everyday hearing aid, a sports‑compatible model, or a solution for children?”

Then ask:

“May I get your name, phone number, and preferred clinic location so we can send you a tailored recommendation?”

Behind the scenes:

Use an LLM to parse the conversation into JSON (name, phone number, product interest, clinic location, plus optional fields such as email or budget range) and pipe it into your CRM or form webhook.

Many modern AI‑chatbot platforms already support this “AI‑chat‑form → CRM” pipeline, reducing friction and improving UX.
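Before the parsed conversation reaches your CRM, it is worth validating it. The sketch below assumes the LLM has been asked to return a JSON object with the field names shown. Those names, the `Lead` class, and the choice of required fields are illustrative, not any particular platform's schema. Sending the payload to a webhook is left out because it depends on your CRM.

```python
import json
from dataclasses import asdict, dataclass, fields


@dataclass
class Lead:
    name: str
    phone: str
    product_interest: str
    clinic_location: str
    email: str = ""          # optional
    budget_range: str = ""   # optional


# Assumption: these are the fields the bot must collect before handing off.
REQUIRED = ("name", "phone", "product_interest", "clinic_location")


def parse_lead(llm_json: str) -> Lead:
    """Validate the LLM's JSON output and turn it into a Lead.

    Raises ValueError if the output is not a JSON object or a required field is empty.
    """
    data = json.loads(llm_json)
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    missing = [f for f in REQUIRED if not str(data.get(f) or "").strip()]
    if missing:
        raise ValueError(f"missing fields: {', '.join(missing)}")
    allowed = {f.name for f in fields(Lead)}
    # Drop any extra keys the model added and normalize values to trimmed strings.
    clean = {k: str(v or "").strip() for k, v in data.items() if k in allowed}
    return Lead(**clean)


def crm_payload(lead: Lead) -> dict:
    """Convert the lead into a plain dict ready to send to a CRM or form webhook."""
    return asdict(lead)
```

Rejecting incomplete leads at this step lets the bot ask a follow-up question in the chat instead of sending half-filled records to your sales team.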

Conversational UX SEO 2026 is the bridge between a simple website and a comprehensive brand experience. When users spend more time talking to your site, it suggests your content is genuinely useful, which can strengthen your domain's perceived authority. By making your content interactive, you don’t just improve your SEO; you build a relationship with your customer before they even leave the page.

Q1. Does a chatbot really help with SEO?

Yes, indirectly. Increasing “dwell time” (the amount of time a user stays on your page) suggests to search engines that your content is relevant and engaging.

Q2. Will a bot slow down my site?

It doesn't have to. In 2026, modern LLM integrations can load asynchronously and run at the edge, so the chat widget need not block page rendering or compromise PageSpeed scores.

Q3. How do I prevent the bot from “hallucinating”?

By using Retrieval-Augmented Generation (RAG), you ground the LLM in your own verified data, which greatly reduces the chance that it strays from your brand’s “truth.”

Q4. Can the chat history be used for SEO?

Absolutely. Analyzing chat logs helps you identify “Content Gaps”—keywords and topics your audience cares about that you haven’t written about yet.

Q5. Is this better than a standard FAQ section?

Yes, because it is personalized. A standard FAQ is static; a conversational bot answers the specific nuance of the user’s individual question.

#UXDesign #SEO2026 #AIAutomation #ConversionRateOptimization #TejomInsights

Brand accounts are seeing a 30% reach drop. It’s time for a Founder-Led Social Strategy. Learn how to humanize your brand and skyrocket engagement.

If you can’t track it, don’t scale it. This guide breaks down the UTM tracking and engagement mapping strategies used by top brands to ensure influencer ROI in 2026.

Traditional agencies are failing. Learn how to transform your brand into a Creator Engine with a future-proof 2026 social strategy focused on authenticity.

Search engines in 2026 value engagement over everything. This guide explains how to implement conversational UX SEO 2026 by using LLM-powered bots to provide instant answers, increasing site stickiness and proving your authority to AI search crawlers.

Search engines in 2026 value engagement over everything. This guide explains how to implement conversational UX SEO 2026 by using LLM-powered bots to provide instant answers, increasing site stickiness and proving your authority to AI search crawlers.

In 2026, the secret to ranking isn’t just using AI—it’s how you supervise it. This guide breaks down the essential SEO content frameworks 2026, showing you how to integrate LLM drafts with human-led E-E-A-T verification to create high-ranking, high-trust content that survives any algorithm update.

Regional markets are the new goldmines. This guide breaks down the regional influencer marketing strategy you need to dominate Kolkata, Odisha, and the North East.

In 2026, the secret to ranking isn’t just using AI—it’s how you supervise it. This guide breaks down the essential SEO content frameworks 2026, showing you how to integrate LLM drafts with human-led E-E-A-T verification to create high-ranking, high-trust content that survives any algorithm update.

This blog covers Planning, Budgeting, and Measuring Influencer Campaigns to help brands boost 2025 ROI.

Trustea sustainability code for Indian tea: real impact, branding, and digital change in India’s tea sector.

Tribal Branding Catalyst builds loyal communities that drive startup growth and supercharge the flywheel marketing model.

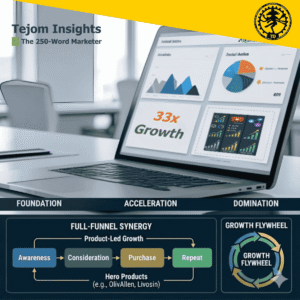

Actionable insights on digital marketing funnel vs flywheel model to optimize growth and retention.

Struggling to rank your blog? Learn 11 actionable SEO strategies to improve local search results for blogs and get found by the right audience.

Learn how to harness Google Ads for e-commerce in India with expert strategies that boost traffic and conversions.

Proven strategy on how to build online presence for a real estate company through websites, listings, social media, advertising, and influencer campaigns.

Google Ads Management Hacks can boost your ROI in no time. Discover actionable, quick-win strategies you can use today with Google Ads management hacks.